With the world projected to generate a staggering 181 zettabytes of data in 2025, businesses are facing unprecedented challenges in harnessing this valuable asset. Data engineering, the discipline of designing and building systems for collecting, storing, and analyzing data, is the backbone of any successful data strategy.

However, the path to data-driven decision-making is often fraught with obstacles such as scalability, data integration, real-time processing, and maintaining data quality.

This is where Databricks emerges as a transformative solution. As a unified data analytics platform, Databricks is purpose-built to address these challenges head-on. But to truly unlock the full potential of this powerful platform, you need an expert partner.

1. Scalability and Performance

The sheer volume of big data can overwhelm traditional data processing systems, leading to slow performance and an inability to handle large-scale workloads. For many organizations, processing petabytes of data efficiently is a constant struggle.

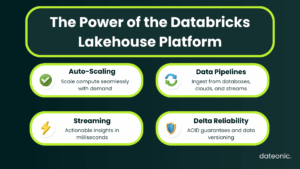

Databricks is built on top of Apache Spark, the leading open-source engine for big data processing.

This foundation lets Databricks scale through features like auto-scaling clusters, which add or remove computing resources as workload demand changes. Because idle capacity is released rather than left running, you pay more closely for what you actually use, which helps both performance and cost.
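As a rough illustration, an auto-scaling cluster is usually described by a lower and an upper bound on worker count. The sketch below builds such a definition as a plain Python dictionary. The field names follow the JSON shape used by the Databricks Clusters API as we understand it. The cluster name, the worker bounds, the idle timeout, and the placeholder runtime and node type values are assumptions for the example, not recommendations.

```python
import json


def autoscaling_cluster_spec(name, min_workers, max_workers):
    """Build a cluster definition that lets workers scale within a range."""
    if not 1 <= min_workers <= max_workers:
        raise ValueError("expected 1 <= min_workers <= max_workers")
    return {
        "cluster_name": name,
        # Placeholders: choose a runtime and node type available in your workspace.
        "spark_version": "<runtime-version>",
        "node_type_id": "<node-type>",
        # Workers are added or removed between these bounds as load changes.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        # Assumed idle timeout: stop the cluster after 30 idle minutes.
        "autotermination_minutes": 30,
    }


# Hypothetical cluster name and bounds, for illustration only.
spec = autoscaling_cluster_spec("etl-nightly", min_workers=2, max_workers=8)
print(json.dumps(spec, indent=2))
```

Keeping the lower bound small limits cost during quiet periods, while the upper bound caps spend during peaks.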

Benefits:

- Effortlessly process massive datasets, from gigabytes to petabytes.

- Significantly reduce data processing times.

- Achieve high performance for data-intensive tasks like ETL and machine learning.

For a deeper dive into getting the most out of your Databricks clusters, explore our guide on Optimizing Clusters in Databricks: Performance, Cost, and Best Practices 2025.

2. Data Ingestion and Integration

Data is rarely stored in one place. Businesses need to collect and integrate data from a multitude of sources, including databases, cloud storage, and real-time streaming platforms. The variety of formats and structures makes this a complex and time-consuming process.

Databricks simplifies data ingestion and integration with its broad support for various data sources and formats. Tools like Lakeflow Connect provide a seamless experience for bringing data into the Databricks Lakehouse Platform, creating a single source of truth for all your data.
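To make the idea of multi-format ingestion concrete, here is a minimal sketch that uses the standard Spark DataFrame reader rather than Lakeflow Connect itself. It reads a CSV source and a JSON source and appends both to a single Delta table. The function name, paths, and table name are hypothetical, and the `spark` argument is assumed to be an active SparkSession such as the one a Databricks notebook provides.

```python
def ingest_orders(spark, csv_path, json_path, target_table):
    """Read two differently formatted sources and append them to one Delta table."""
    csv_orders = (
        spark.read.format("csv")
        .option("header", "true")       # first row holds column names
        .option("inferSchema", "true")  # let Spark infer column types
        .load(csv_path)
    )
    json_orders = spark.read.format("json").load(json_path)

    # Align columns by name; columns missing on one side are filled with nulls.
    combined = csv_orders.unionByName(json_orders, allowMissingColumns=True)

    # Append the unified rows to a Delta table (created if it does not exist).
    combined.write.format("delta").mode("append").saveAsTable(target_table)
    return combined
```

In practice the two sources would need compatible column types for the union to succeed, so a real pipeline would likely cast or rename columns first.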

Benefits:

- Streamline the creation of data pipelines.

- Reduce the time and effort required for data integration.

- Accelerate the delivery of actionable insights to business users.

Understanding how to manage changing data is crucial for effective integration. Learn more in our article on What is Change Data Feed (CDF) and How Databricks Helps with its Implementation.

3. Real-time Data Processing

In a fast-paced business environment, the ability to analyze data in real time is critical for making timely decisions. However, processing streaming data is notoriously complex, and many organizations struggle to keep up with the constant flow of information.

Databricks addresses this with Structured Streaming, a high-level API for building end-to-end real-time data pipelines. It allows you to treat a stream of data in the same way you would a static table, simplifying the development of real-time applications.
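The "stream as a table" idea can be sketched with Spark's built-in `rate` test source, which emits rows with a `timestamp` and an increasing `value`. The aggregation below is written exactly as it would be for a static DataFrame. The function name, the query name, and the choice of the in-memory sink are assumptions made for a demo, and `spark` is assumed to be an active SparkSession.

```python
def start_bucket_counts(spark, query_name="bucket_counts"):
    """Start a streaming aggregation written like an ordinary batch query."""
    events = (
        spark.readStream.format("rate")  # built-in test source: (timestamp, value)
        .option("rowsPerSecond", 5)
        .load()
    )

    # The same DataFrame operations you would apply to a static table.
    counts = events.selectExpr("value % 3 AS bucket").groupBy("bucket").count()

    return (
        counts.writeStream.format("memory")  # in-memory sink, suitable for demos only
        .queryName(query_name)               # results become queryable under this name
        .outputMode("complete")              # emit the full aggregate on each trigger
        .start()
    )
```

In a notebook, one might call `query = start_bucket_counts(spark)`, inspect results with `spark.sql("SELECT * FROM bucket_counts")`, and finish with `query.stop()`. A production pipeline would write to a durable sink with a checkpoint location instead.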

Benefits:

- Achieve low-latency data processing for real-time analytics.

- Build responsive applications that can react to events as they happen.

- Gain a competitive edge by making faster, more informed decisions.

The impact of real-time data can be seen across industries. Discover how it’s transforming logistics in our post on How Databricks Can Help Reduce Waste in Logistics.

4. Data Quality and Reliability

The old adage "garbage in, garbage out" has never been more true. Poor data quality, characterized by inaccuracies, inconsistencies, and incompleteness, can lead to flawed insights and misguided business decisions.

The foundation of the Databricks Lakehouse is Delta Lake, an open-source storage layer that brings reliability to data lakes.

Delta Lake ensures data quality through features like:

- ACID Transactions: Guarantees that data operations are atomic, consistent, isolated, and durable.

- Schema Enforcement: Prevents bad data from being written to your tables.

- Data Versioning: Allows you to travel back in time to previous versions of your data for audits or to fix errors.
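The three features above can be seen together in a short sketch. It appends rows to a Delta table, lists the table's commit history, and reads back an earlier version. The function name, table name, and version number are hypothetical, and `spark` and `new_rows` are assumed to be an active SparkSession and a DataFrame whose columns match the target table.

```python
def append_and_audit(spark, new_rows, table_name, version=0):
    """Append rows to a Delta table, then read an earlier version back."""
    # The append commits atomically. If new_rows does not match the table's
    # schema, Delta Lake rejects the write instead of storing mismatched data.
    new_rows.write.format("delta").mode("append").saveAsTable(table_name)

    # Each commit creates a new table version, listed by DESCRIBE HISTORY.
    history = spark.sql(f"DESCRIBE HISTORY {table_name}")

    # Time travel: query the table as it looked at an earlier version.
    snapshot = spark.sql(f"SELECT * FROM {table_name} VERSION AS OF {version}")
    return history, snapshot
```

Comparing `snapshot` with the current table is one simple way to audit what a given write changed.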

Benefits:

- Vastly improve data integrity and reliability.

- Simplify data management and governance.

- Build trust in your data and analytical outcomes.

Managing your company’s most important asset, its data, is paramount. For more on this topic, see our comparison of the Best 2025 Platforms for Managing Important Company Data.

| Challenge | Traditional Stack | Databricks |
|---|---|---|
| Scaling to Petabytes | Manual config, high costs | Auto-scaling clusters |
| Data Integration | Custom code, vendor lock-in | Lakeflow Connect + open formats |
| Streaming Data | Complex, fragile | Structured Streaming API |
| Data Quality | Limited checks, data corruption | Delta Lake ACID + schema enforcement |

Conclusion

Databricks offers a comprehensive solution to the most pressing challenges in data engineering, from scalability and data integration to real-time processing and data quality.

By leveraging the power of the Databricks Lakehouse Platform, organizations can streamline their data engineering processes and unlock the full potential of their data.

Ready to take the next step in your data journey? Dateonic is the leading consultancy for Databricks implementation, guiding businesses to not just adopt the technology, but to master it.

Contact Dateonic today for expert guidance and implementation support. Let us be your trusted partner in building a data-driven future with Databricks.