There is a distinct, visceral anxiety that accompanies a Friday afternoon deployment in a fragile data platform. For many data engineering teams, pushing pipelines to production feels less like a synchronized software release and more like a high-wire act without a net.

Databricks is an undisputed powerhouse. However, we consistently see enterprise organizations unintentionally handicap the platform by treating it as little more than a managed Spark cluster. By relying on basic functionality, they leave the platform’s most advanced deployment and governance capabilities completely dormant.

Scaling a modern data platform isn’t about writing more efficient PySpark code in an isolated cell. It is about treating your data infrastructure with the same rigorous software engineering, CI/CD, and DevOps standards as any tier-one enterprise application.

Here is how organizations hit the deployment wall, and how you can architect a resilient, automated alternative.

Where „Notebook DevOps” Breaks Down at Scale

The frictionless entry point of Databricks is its greatest initial advantage, but it rapidly becomes a liability as your team grows. When organizations fail to graduate from rapid prototyping to enterprise-grade deployments, several architectural fractures begin to show.

The Illusion of Agility

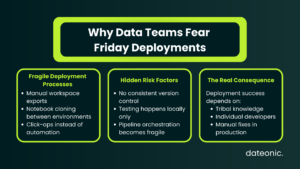

Many teams rely on manual workspace exports, cloning, or „click-ops” to move code between environments. This creates an illusion of agility. In reality, it breeds a deployment lifecycle entirely dependent on tribal knowledge, devoid of version control, and completely unsuited for complex pipeline orchestration.

Shared Workspaces and the „Overwritten Code” Crisis

When multiple engineers operate inside a shared development workspace without strict Git integration and modularized code, chaos ensues. Code gets accidentally overwritten, testing is localized, and platform leads spend hours debugging the classic „it worked on my cluster” syndrome.

The Blind Spot: Data and Infrastructure Separation

The most critical failure in basic setups is separating code environments without properly segmenting the underlying data. If your Development pipeline can accidentally overwrite or drop a Production table, your CI/CD process is fundamentally broken. Without treating data governance as a first-class citizen alongside your infrastructure, your deployments carry unacceptable risk.

Architecting for Scale: The Dateonic Approach

At Dateonic, our philosophy is simple: data pipelines, machine learning models, and infrastructure must be managed as cohesive software projects.

Moving to Databricks Asset Bundles (DABs)

To tap into the platform’s true potential, organizations must transition from ad-hoc notebooks to Databricks Asset Bundles (DABs). DABs allow your team to define data jobs, pipelines, and ML workflows as code. This ensures that every deployment is declarative, thoroughly tested via CI/CD (such as GitHub Actions or GitLab CI), and consistently reproducible across any environment.

True Environment Isolation Driven by Unity Catalog

CI/CD isn’t just about moving code; it is intrinsically linked to data security. The „right way” to manage environments (Dev, Staging, Prod) requires tight integration with Unity Catalog.

By utilizing isolated Service Principals bound to specific environments, we ensure that a Development service account physically cannot access Production catalogs. Row-level and table-level privileges are systematically enforced by architectural design, not by blind trust in a developer’s notebook execution.

The Architecture Comparison

| Feature | The „Notebook DevOps” Way | The Dateonic Architecture |

|---|---|---|

| Code Management | UI-based editing, manual exports | Git-backed Databricks Asset Bundles |

| Deployments | Click-ops, UI cloning, Repos syncing | Automated CI/CD pipelines (GitHub Actions/GitLab) |

| Data Security | Implicit trust, shared access keys | Unity Catalog implementation, strictly isolated Service Principals |

| Rollbacks | Manually reverting notebook versions | Instant, declarative state reversion via Git |

Show, Don’t Tell: Automated Deployments in Action

We believe in showing, not just telling. Check out our public GitHub repository (Dateonic/Databricks-Asset-Bundles-tutorial) for production-grade examples of what we teach. You can explore how we structure DABs, define infrastructure as code, and wire up automated testing.

Databricks Asset Bundles – Hands-On Tutorial. Part 1 – running SQL and python files as notebooks.

Watch how the Dateonic team structures a zero-downtime deployment utilizing Databricks Asset Bundles and Unity Catalog governance.

Building Capability from Within

When CTOs and Platform Leads realize they need to overhaul their deployment architecture, the immediate instinct is to hire external talent.

Here is the industry reality: finding a senior engineer who deeply understands Databricks internals and advanced DevOps principles is nearly impossible. Searching for these „unicorns” can stall your roadmap for six to eight months.

The smartest, most cost-effective strategy is to upskill the internal team you already have. They already understand your business logic, your data models, and your organizational goals. They just need the right architectural playbook.

The Databricks DevOps Training

This is where Dateonic steps in. Our specialized Databricks DevOps Training is designed to transform your existing data practitioners into highly capable platform architects. We don’t teach basic Spark; we teach enterprise deployment mechanics.

During our workshop, your team will master:

- Transitioning legacy workflows into Databricks Asset Bundles.

- Designing automated, highly resilient CI/CD pipelines.

- Implementing absolute environment isolation using Service Principals.

- Wiring Unity Catalog directly into the deployment lifecycle for unshakeable governance.

Stop deploying by clicking and hoping for the best. Book a discovery call today to customize our Databricks DevOps Training for your team’s specific technology stack, and start treating your data platform with the engineering rigor it deserves.