You invested in a cutting-edge Databricks ecosystem to achieve unprecedented scale and velocity. Yet under the hood of many enterprise deployments, engineers are still clicking through the UI by hand to configure clusters, assign permissions, and provision workspaces.

This "ClickOps" approach creates an immediate ceiling on your data platform's potential. It leads to severe configuration drift, where your Development, Staging, and Production environments slowly morph into entirely different beasts.

Security patches get missed, deployments become a harrowing bottleneck, and platform stability becomes dangerously reliant on the tribal knowledge of one or two senior engineers.

To truly unlock the advanced capabilities of a modern data ecosystem, infrastructure must be treated as software. It is time to bridge the gap between Data Engineering and DevOps.

How Most Companies Get Databricks Infrastructure Wrong

Many organizations fundamentally misunderstand what Databricks is capable of. They treat it merely as a basic Spark cluster hosted in the cloud, completely missing the enterprise-grade orchestration and governance features available to them.

The Manual Workspace Trap

When workspaces are spun up manually, they exist as isolated silos rather than governed nodes within an enterprise architecture. Every new project requires a DevOps engineer to configure networks, instance profiles, and storage layers by hand. This is not only slow; it all but guarantees human error.

Hardcoded Governance & Security Blind Spots

Governance cannot be an afterthought. Relying on administrators to manually grant table access or configure row-level permissions creates an auditing nightmare. When security is handled through the UI, there is no version history, no peer review, and no way to instantly roll back a disastrous permission change.
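To make the contrast concrete, here is a minimal Python sketch of what "grants as code" buys you: the desired privileges live in a version-controlled data structure, and a reconciliation step computes exactly which Unity Catalog GRANT and REVOKE statements would bring the live workspace back in line. Rolling back a bad change then means reverting a commit and reconciling again. The `reconcile` helper, the securables, and the group names are hypothetical; in a Terraform-based setup, the Databricks provider performs this kind of reconciliation for you.

```python
from typing import Iterable

# A grant is (securable_type, securable_name, principal, privilege).
Grant = tuple[str, str, str, str]


def reconcile(desired: Iterable[Grant], actual: Iterable[Grant]) -> list[str]:
    """Return the Unity Catalog SQL that turns the `actual` grants into `desired`."""
    desired_set, actual_set = set(desired), set(actual)
    statements = []
    # Declared in code but missing from the workspace: grant them.
    for kind, name, principal, privilege in sorted(desired_set - actual_set):
        statements.append(f"GRANT {privilege} ON {kind} {name} TO `{principal}`")
    # Present in the workspace but not declared in code (e.g. a UI click): revoke them.
    for kind, name, principal, privilege in sorted(actual_set - desired_set):
        statements.append(f"REVOKE {privilege} ON {kind} {name} FROM `{principal}`")
    return statements


if __name__ == "__main__":
    # Hypothetical securables and groups, for illustration only.
    desired = [
        ("SCHEMA", "main.sales", "analysts", "USE SCHEMA"),
        ("TABLE", "main.sales.orders", "analysts", "SELECT"),
    ]
    actual = [
        ("TABLE", "main.sales.orders", "analysts", "SELECT"),
        ("TABLE", "main.sales.orders", "interns", "MODIFY"),  # granted by hand in the UI
    ]
    for statement in reconcile(desired, actual):
        print(statement)
    # GRANT USE SCHEMA ON SCHEMA main.sales TO `analysts`
    # REVOKE MODIFY ON TABLE main.sales.orders FROM `interns`
```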

The Infrastructure vs Data Pipeline Disconnect

We frequently see organizations that boast strict CI/CD for their data pipelines yet leave the underlying infrastructure entirely out of the loop. When a data pipeline inevitably fails in Production, teams waste days trying to determine whether the cause was bad code or simply a misconfigured, manually tweaked cluster.
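A misconfigured cluster is far easier to rule out when its intended settings live in code. The sketch below compares a declared cluster specification with the one actually running and lists every drifted field. The field names mirror common Databricks cluster settings, but both specs are hypothetical; in practice, `terraform plan` performs this comparison between your configuration and the provider's refreshed view of the cluster.

```python
from typing import Any


def find_drift(declared: dict[str, Any], live: dict[str, Any], path: str = "") -> list[str]:
    """Return 'path: declared -> live' strings for every mismatch, in key order."""
    drift = []
    for key in sorted(set(declared) | set(live)):
        where = f"{path}.{key}" if path else key
        want, have = declared.get(key), live.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            # Recurse into nested blocks such as autoscale or spark_conf.
            drift.extend(find_drift(want, have, where))
        elif want != have:
            drift.append(f"{where}: {want!r} -> {have!r}")
    return drift


if __name__ == "__main__":
    # Hypothetical cluster specs: what the code declares vs. what is running.
    declared = {
        "spark_version": "15.4.x-scala2.12",
        "node_type_id": "i3.xlarge",
        "autoscale": {"min_workers": 2, "max_workers": 8},
    }
    live = {
        "spark_version": "15.4.x-scala2.12",
        "node_type_id": "i3.2xlarge",  # resized by hand in the UI
        "autoscale": {"min_workers": 2, "max_workers": 20},
    }
    for line in find_drift(declared, live):
        print(line)
    # autoscale.max_workers: 8 -> 20
    # node_type_id: 'i3.xlarge' -> 'i3.2xlarge'
```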

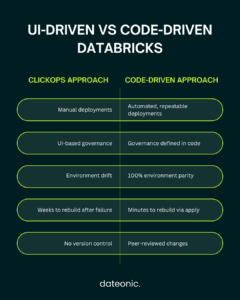

The Contrast: UI-Driven vs Code-Driven Architecture

| Feature | The "ClickOps" Approach | The Dateonic Way (Terraform + DABs) |
|---|---|---|
| Deployments | Manual, slow, and error-prone. | Automated, repeatable, and fast. |
| Governance | UI-based grants that are difficult to audit. | Unity Catalog defined entirely in version-controlled Terraform code. |
| Environment Parity | Dev, Stage, and Prod steadily drift apart. | Identical environments enforced by code. |
| Disaster Recovery | Rebuilding takes weeks of guesswork. | Rebuilding takes minutes via terraform apply. |
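Environment parity follows from deriving every environment from one shared definition and allowing only a small, explicit set of per-environment overrides. Here is a minimal Python sketch of that rule; the setting names, their values, and the `ALLOWED_OVERRIDES` policy are all hypothetical.

```python
from copy import deepcopy
from typing import Any

# Settings shared by every environment (hypothetical values).
BASE: dict[str, Any] = {
    "spark_version": "15.4.x-scala2.12",
    "unity_catalog_enabled": True,
    "max_workers": 4,
}

# Only these keys may differ between environments (hypothetical policy).
ALLOWED_OVERRIDES = {"max_workers", "catalog"}


def build_env(overrides: dict[str, Any]) -> dict[str, Any]:
    """Merge overrides onto BASE, rejecting anything outside the allowed set."""
    illegal = set(overrides) - ALLOWED_OVERRIDES
    if illegal:
        raise ValueError(f"overrides not allowed: {sorted(illegal)}")
    config = deepcopy(BASE)
    config.update(overrides)
    return config


# Every environment is derived from BASE, so shared settings cannot drift apart.
ENVIRONMENTS = {
    "dev": build_env({"catalog": "dev"}),
    "staging": build_env({"catalog": "staging"}),
    "prod": build_env({"catalog": "prod", "max_workers": 16}),
}
```

With this rule in place, a call such as `build_env({"spark_version": "13.3.x-scala2.12"})` raises a `ValueError`, so a runtime mismatch between Dev and Prod cannot slip in unnoticed.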

The Dateonic Way: 100% Code-Driven Databricks Architecture

At Dateonic, we architect platforms where the UI is strictly read-only for administrators. Everything from the root workspace down to the specific column-level permissions is defined in code.

Governing Unity Catalog Through Terraform

In a mature architecture, Data Governance is a first-class citizen. We advocate managing Unity Catalog, including catalogs, schemas, external locations, and strict row- and table-level privileges, entirely in Terraform code. This guarantees that your data security policies are peer-reviewed and version-controlled, and it protects them from UI-based permission drift: any out-of-band change surfaces in the next terraform plan and is reverted on the next apply.
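Terraform accepts configuration in JSON as well as HCL (files ending in `.tf.json`), so the shape of a code-defined catalog, schema, and set of grants can be sketched by generating that JSON from Python. This assumes the `databricks/databricks` provider's `databricks_catalog`, `databricks_schema`, and `databricks_grants` resources, with provider authentication configured separately. The `unity_catalog_config` helper and the catalog, schema, and group names are hypothetical, and a real project would normally write this directly in HCL.

```python
import json


def unity_catalog_config(catalog: str, schema: str, readers: str) -> dict:
    """Build a Terraform JSON document declaring a catalog, a schema, and read grants."""
    return {
        "terraform": {
            "required_providers": {"databricks": {"source": "databricks/databricks"}}
        },
        "resource": {
            "databricks_catalog": {
                catalog: {"name": catalog, "comment": "Managed by Terraform"}
            },
            "databricks_schema": {
                schema: {
                    # In JSON syntax, references to other resources are "${...}" strings.
                    "catalog_name": f"${{databricks_catalog.{catalog}.name}}",
                    "name": schema,
                }
            },
            # databricks_grants is authoritative per securable: unlisted grants are removed.
            "databricks_grants": {
                f"{catalog}_usage": {
                    "catalog": f"${{databricks_catalog.{catalog}.name}}",
                    "grant": [{"principal": readers, "privileges": ["USE_CATALOG"]}],
                },
                f"{schema}_readers": {
                    "schema": f"${{databricks_schema.{schema}.id}}",
                    "grant": [
                        {"principal": readers, "privileges": ["USE_SCHEMA", "SELECT"]}
                    ],
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical names; writes a file Terraform will pick up alongside any .tf files.
    with open("unity_catalog.tf.json", "w") as fh:
        json.dump(unity_catalog_config("sales", "orders", "analysts"), fh, indent=2)
```

Because the grants sit in a file under version control, every privilege change goes through the same pull-request review as application code.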

The Perfect Pairing: Terraform + Databricks Asset Bundles (DABs)

A common architectural mistake is trying to force Terraform to manage everything, including daily data jobs. The "Right Way" requires a strict dividing line:

- Terraform handles the foundational, slow-moving infrastructure: Workspaces, networking, Unity Catalog setup, and instance profiles.

- Databricks Asset Bundles (DABs) handle the fast-moving workload CI/CD: Data jobs, DLT pipelines, and ML models.

Getting this architectural boundary right is the key to creating a scalable, frictionless developer experience.
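One way to keep that boundary from eroding is a small CI check that fails when workload resources creep into Terraform. The sketch below inspects managed-resource addresses (as printed by `terraform state list`) and flags types that, under this split, belong in a bundle. The contents of `BUNDLE_OWNED` are an assumption you would tune to your own policy, and the address parsing assumes simple addresses without nested brackets.

```python
import re

# Workload resource types that, under this split, should be deployed with DABs.
# This set is an assumption, not an official list.
BUNDLE_OWNED = {
    "databricks_job",
    "databricks_pipeline",
    "databricks_notebook",
    "databricks_mlflow_model",
}


def resource_type(address: str) -> str:
    """Extract the type from an address like 'module.x.databricks_job.etl[0]'."""
    # Drop a trailing index such as [0] or ["key"], then take the second-to-last part.
    base = re.sub(r"\[[^\]]*\]$", "", address)
    return base.split(".")[-2]


def misplaced_resources(addresses: list[str]) -> list[str]:
    """Return the addresses Terraform manages that a bundle should own instead."""
    return [a for a in addresses if resource_type(a) in BUNDLE_OWNED]


if __name__ == "__main__":
    # Hypothetical output of `terraform state list`.
    state = [
        "databricks_mws_workspaces.main",
        "module.uc.databricks_grants.sales",
        "databricks_job.nightly_etl",
    ]
    print(misplaced_resources(state))  # ['databricks_job.nightly_etl']
```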

Show, Don’t Tell: Automated Deployments in Action

We believe in showing, not just telling. Check out our public GitHub repository (Dateonic/Databricks-Asset-Bundles-tutorial) for production-grade examples of what we teach. You can explore exactly how to structure your IaC and DABs repositories for enterprise scale.

https://www.youtube.com/@dateonic

Watch our Architects demonstrate an end-to-end infrastructure deployment using Terraform and Databricks Asset Bundles.

Why You Can’t Just Hire Your Way Out of This

The reality of the modern data landscape is that true Databricks experts are exceedingly rare. Finding a "unicorn" engineer who possesses both deep architectural knowledge of the Databricks ecosystem and advanced Terraform state management experience is a prolonged, expensive hunt.

The smartest and most cost-effective strategy is to leverage the talent you already have. Your current Data and Cloud engineers already understand your business logic. By upskilling your existing internal team, you bypass the talent shortage entirely and build a self-sustaining engineering culture.

Our Databricks & Terraform Training Workshop

Our specialized Databricks & Terraform Training is designed specifically to fix these architectural gaps and turn your existing team into platform experts.

From Manual Clicks to Automated Infrastructure in Days

We don't just teach your team basic syntax. We teach them the Dateonic philosophy of architectural best practice. Your engineers will learn "The Right Way" to structure repositories, manage state, and build a resilient data ecosystem.

What Your Team Will Actually Build

During the workshop, your team will achieve tangible, production-ready outcomes, including:

- Writing robust Terraform configurations and reusable modules for the Databricks provider.

- Automating Unity Catalog grants and securing external storage locations via code.

- Bootstrapping secure, networked workspaces from scratch.

- Integrating Infrastructure as Code into your existing CI/CD pipelines (such as GitHub Actions or Azure DevOps).
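As a starting point for the CI/CD integration item, the sketch below wraps `terraform plan -detailed-exitcode`, which exits with 0 when there are no changes, 1 on error, and 2 when changes are present. Run on a schedule against Production, the same gate doubles as drift detection. It assumes `terraform init` has already run in the working directory, and the policy of failing on any pending change is an assumption suited to a drift check rather than to a pull-request plan; GitHub Actions or Azure DevOps would simply invoke the script as a pipeline step.

```python
import subprocess
import sys


def interpret_plan_exit_code(code: int) -> str:
    """Map `terraform plan -detailed-exitcode` exit codes to a CI outcome."""
    if code == 0:
        return "no-changes"
    if code == 2:
        return "changes-pending"
    return "error"


def run_plan(workdir: str) -> str:
    """Run a non-interactive plan in `workdir` and classify the result."""
    result = subprocess.run(
        ["terraform", "plan", "-detailed-exitcode", "-input=false", "-no-color"],
        cwd=workdir,
        check=False,  # exit code 2 is expected, so don't raise on non-zero
    )
    return interpret_plan_exit_code(result.returncode)


if __name__ == "__main__":
    outcome = run_plan(sys.argv[1] if len(sys.argv) > 1 else ".")
    print(f"terraform plan: {outcome}")
    # Drift-check policy (assumption): fail on errors and on un-reviewed changes.
    sys.exit(0 if outcome == "no-changes" else 1)
```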

Stop Managing Your Platform by Hand

Manual configurations and fragmented governance are preventing your platform from reaching its true scale. It’s time to enforce strict, code-driven architecture across your entire data ecosystem.

Ready to eliminate configuration drift and turn your infrastructure into a competitive advantage? Book a discovery call with our Architects today to customize a Databricks & Terraform Training program for your engineering team.