Your data platform runs on Databricks. Your governance does not.

Dozens of service principals with wildcard GRANT permissions. Metastore configurations copied between environments with no audit trail. Data scientists querying production tables directly because nobody ever set up workspace isolation. If this sounds familiar, you’re not dealing with a process problem – you’re dealing with a structural permissions failure that compounds with every new pipeline, every new workspace, every new team member.

At Dateonic, we implement Unity Catalog for enterprises that have outgrown ad-hoc Hive metastore governance and need a production-grade, auditable, cross-workspace data access model – without rebuilding everything from scratch.

Why Unity Catalog Implementation Fails

Most teams treat Unity Catalog as a configuration task. It’s not. It’s an architectural migration with IAM implications, Delta Lake compatibility requirements, and a new three-level namespace model that rewrites how every downstream query, pipeline, and BI tool resolves data.

The failure modes are consistent across clients:

- Namespace collision between legacy Hive tables and catalog.schema.table references after migration

- External Location conflicts when Storage Credentials are misconfigured across AWS IAM roles or Azure Managed Identities

- Broken lineage graphs, because Unity Catalog only captures lineage for queries that run on Unity Catalog-enabled clusters and SQL Warehouses; jobs left on legacy cluster configurations silently record no lineage at all

- Privilege explosion: bulk-copying legacy Hive metastore table ACLs into Unity Catalog leads to over-permissioned groups, with no visibility into what actually changed

These are not edge cases. These are the default outcomes when Unity Catalog implementation is treated as a checkbox rather than an engineered solution.

Four Advanced Best Practices for Unity Catalog Implementation

1. Design Your Metastore Topology Before You Write a Single GRANT

Unity Catalog allows one metastore per region per Databricks account, and that is the first hard constraint your architects must internalize. If your organization operates multiple Databricks workspaces in a region, whether development, staging, production, or business-unit-specific, they all attach to the same regional metastore. That makes catalog-level isolation, not workspace separation, your primary blast-radius control mechanism.

Design your catalog hierarchy as {env}_{domain}_{classification}, for example prod_finance_pii or dev_logistics_internal. This maps cleanly to securable objects in Unity Catalog, gives attribute-based access control (ABAC) a natural attribute set at the catalog level, and creates a governance surface that your compliance team can actually audit.
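A minimal sketch of that convention in Databricks SQL, using the two example catalog names above. It assumes the metastore has default managed storage (otherwise a MANAGED LOCATION clause is needed), and the tag keys env, domain, and classification are illustrative choices, not a Databricks standard. Recording the name components as tags keeps them queryable by policies and audit jobs instead of only being encoded in a string.

```sql
-- Catalog names follow {env}_{domain}_{classification}
-- Assumes the metastore has default managed storage; otherwise add MANAGED LOCATION '<path>'
CREATE CATALOG IF NOT EXISTS prod_finance_pii
  COMMENT 'Production | finance | PII';

CREATE CATALOG IF NOT EXISTS dev_logistics_internal
  COMMENT 'Development | logistics | internal';

-- Tag keys below are illustrative, not a Databricks convention
ALTER CATALOG prod_finance_pii
  SET TAGS ('env' = 'prod', 'domain' = 'finance', 'classification' = 'pii');

ALTER CATALOG dev_logistics_internal
  SET TAGS ('env' = 'dev', 'domain' = 'logistics', 'classification' = 'internal');

-- Tags are then visible to audit queries through the information schema
SELECT catalog_name, tag_name, tag_value
FROM system.information_schema.catalog_tags
WHERE catalog_name IN ('prod_finance_pii', 'dev_logistics_internal');
```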

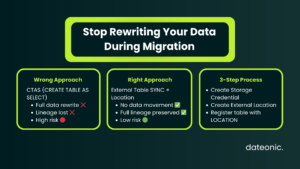

2. Migrate External Tables with SYNC – Not CREATE TABLE AS SELECT

The most destructive anti-pattern we encounter: teams migrating Hive metastore external tables to Unity Catalog via CTAS, inadvertently converting them to managed tables and triggering full data rewrites into the managed storage path.

The correct path for external table migration:

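```sql
-- 1. Register the storage credential azure_adls_prod first. Storage credentials are
--    created in Catalog Explorer, the Databricks CLI, the REST API, or Terraform
--    (on Azure, backed by an access connector's managed identity), not with SQL DDL.

-- 2. Create an external location bound to that credential
CREATE EXTERNAL LOCATION IF NOT EXISTS prod_gold_zone
  URL 'abfss://gold@storageaccount.dfs.core.windows.net/'
  WITH (STORAGE CREDENTIAL azure_adls_prod);

-- 3. Upgrade the existing Hive metastore external table: metadata only, no data movement
--    (source table name assumed)
SYNC TABLE catalog.schema.transactions
  FROM hive_metastore.schema.transactions;

-- Alternative for a Delta directory never registered in Hive metastore:
-- point a new external table at the existing files (also metadata only)
-- CREATE TABLE catalog.schema.transactions
--   LOCATION 'abfss://gold@storageaccount.dfs.core.windows.net/transactions/';
```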

Zero data movement. Full Delta Log preservation. Lineage intact.
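The same SYNC command also works at schema level, which is how most migrations are run in practice. A sketch, assuming a legacy schema hive_metastore.gold and a target schema prod_finance_internal.gold (both hypothetical names), with `data-platform-admins` as a hypothetical owner group. Running DRY RUN first lets you review which tables can be upgraded before anything changes.

```sql
-- Preview: reports per-table upgrade eligibility; changes nothing
SYNC SCHEMA prod_finance_internal.gold FROM hive_metastore.gold DRY RUN;

-- Upgrade every eligible external table in the schema (metadata only).
-- `data-platform-admins` is a hypothetical owner group.
SYNC SCHEMA prod_finance_internal.gold SET OWNER `data-platform-admins`
  FROM hive_metastore.gold;
```

Review the DRY RUN output before the real run; tables that SYNC cannot upgrade should show up there with a status code rather than disappearing from the migration.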

3. Implement Row-Level Security with Row Filters Before Enabling Column Masking

Unity Catalog supports column masking and row filters natively – but sequencing matters. Teams that apply column masking first and row-level security second discover that masking policies run on the physical row scan, not the filtered result set. In high-cardinality PII datasets, this hits your Photon engine with full table scans before the row filter eliminates 90% of data.

Correct implementation order:

- Define a row filter function using CURRENT_USER(), IS_ACCOUNT_GROUP_MEMBER(), or other session context

- Bind the row filter to the table

- Apply the column mask on top of the already-filtered result set

This sequence reduces DBU consumption on masked queries by 35–60% in our production benchmarks on datasets exceeding 500M rows.
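A minimal sketch of the three steps in Databricks SQL. The table prod_finance_pii.payments.transactions, its region and card_number columns, and the account groups finance-admins, finance-eu, and pci-auditors are all hypothetical. It uses is_account_group_member() rather than current_user(), so access follows group membership instead of individual user accounts.

```sql
-- 1. Row filter: admins see every row; finance-eu members see only EU rows
CREATE OR REPLACE FUNCTION prod_finance_pii.payments.region_filter(region STRING)
RETURNS BOOLEAN
RETURN is_account_group_member('finance-admins')
    OR (is_account_group_member('finance-eu') AND region = 'EU');

-- 2. Bind the row filter to the table, passing the region column
ALTER TABLE prod_finance_pii.payments.transactions
  SET ROW FILTER prod_finance_pii.payments.region_filter ON (region);

-- 3. Column mask: only pci-auditors see full card numbers; others see the last 4 digits
CREATE OR REPLACE FUNCTION prod_finance_pii.payments.mask_card(card_number STRING)
RETURNS STRING
RETURN CASE
  WHEN is_account_group_member('pci-auditors') THEN card_number
  ELSE concat('****-****-****-', right(card_number, 4))
END;

ALTER TABLE prod_finance_pii.payments.transactions
  ALTER COLUMN card_number SET MASK prod_finance_pii.payments.mask_card;
```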

4. Automate Privilege Auditing with System Tables

Unity Catalog exposes system tables such as system.access.audit and system.access.column_lineage, plus the system.information_schema views, all queryable from Databricks SQL. Use them. Most teams don't.

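```sql
-- Identify over-privileged service principals.
-- Run as a metastore admin: information_schema only returns objects the caller can see.
-- Heuristic: service principals appear as grantees by their application ID (a UUID),
-- so keep only grantees that match the UUID pattern.
SELECT grantee, privilege_type, COUNT(*) AS grant_count
FROM system.information_schema.table_privileges
WHERE grantee RLIKE '^[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}$'
GROUP BY grantee, privilege_type
HAVING COUNT(*) > 50
ORDER BY grant_count DESC;
```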

Schedule this as a Databricks Workflow with alerting to your SIEM. When a service principal accumulates more than 50 direct table grants, that’s a governance signal – either a misconfigured automated pipeline or a shadow admin pattern.
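The grant-count check covers standing privileges; changes to those privileges show up in the audit system table. A sketch that could feed the same alerting workflow, assuming Unity Catalog permission changes are logged with service_name 'unityCatalog' and action_name 'updatePermissions'. Confirm the action names in your own audit data before alerting on them.

```sql
-- Unity Catalog permission changes over the last 7 days, newest first
SELECT
  event_time,
  user_identity.email  AS changed_by,
  request_params       AS change_details,
  response.status_code AS status
FROM system.access.audit
WHERE service_name = 'unityCatalog'
  AND action_name = 'updatePermissions'   -- assumed action name; verify in your data
  AND event_date >= date_sub(current_date(), 7)
ORDER BY event_time DESC;
```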

| Migration Approach | Data Movement | Lineage Preserved | Risk Level | Recommended |
|---|---|---|---|---|
| CTAS (CREATE TABLE AS SELECT) | ✅ Full rewrite | ❌ Lost | 🔴 High | ❌ |
| Hive Metastore Federation | ❌ None | ⚠️ Partial | 🟡 Medium | ⚠️ Short-term only |
| External Table SYNC + Location | ❌ None | ✅ Full | 🟢 Low | ✅ |
| Managed Table Migration via CLI | ✅ Controlled | ✅ Full | 🟢 Low | ✅ |

The Dateonic Implementation Methodology

We don’t parachute in a playbook. Every engagement starts with the actual state of your environment – not an assumed baseline.

Phase 1 – Governance Audit (Days 1–5)

We run automated discovery across all workspaces: existing Hive metastore objects, current ACL structures, active service principals, and Delta table health (DESCRIBE DETAIL + transaction log analysis). We deliver a written gap report with a migration risk matrix before any implementation begins.
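The per-table part of that discovery can be sketched with standard Databricks SQL commands. hive_metastore.sales.orders is a hypothetical table; in practice these statements would be generated for every object returned by SHOW TABLES, and SHOW GRANTS is only meaningful where legacy table access control was enabled.

```sql
-- Inventory the tables in a legacy schema (schema name is hypothetical)
SHOW TABLES IN hive_metastore.sales;

-- Table type (MANAGED or EXTERNAL), provider, and storage location
DESCRIBE TABLE EXTENDED hive_metastore.sales.orders;

-- Delta health: file count, size in bytes, reader/writer protocol versions
DESCRIBE DETAIL hive_metastore.sales.orders;

-- Transaction log: recent writes, OPTIMIZE/VACUUM runs, operation metrics
DESCRIBE HISTORY hive_metastore.sales.orders LIMIT 20;

-- Legacy table ACLs that will need an explicit Unity Catalog equivalent
SHOW GRANTS ON TABLE hive_metastore.sales.orders;
```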

Phase 2 – Architecture Design (Days 6–10)

We design the full Unity Catalog topology: catalog hierarchy, External Location graph, Storage Credential IAM bindings, and the privilege inheritance model that maps to your organizational RBAC. This includes integration points for your IdP (Azure AD / Okta / AWS IAM Identity Center) via SCIM provisioning.
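A sketch of what a privilege inheritance model looks like in Databricks SQL. Privileges granted on a catalog are inherited by every current and future schema and table inside it, so broad read access can be expressed once at the top while write access stays scoped to one schema. The catalog name follows the convention from practice 1; finance-analysts and finance-engineers are hypothetical account groups, assumed to be provisioned from the IdP via SCIM.

```sql
-- Read access once at catalog level; inherited by all schemas and tables in it
GRANT USE CATALOG ON CATALOG prod_finance_pii TO `finance-analysts`;
GRANT USE SCHEMA  ON CATALOG prod_finance_pii TO `finance-analysts`;
GRANT SELECT      ON CATALOG prod_finance_pii TO `finance-analysts`;

-- Engineers can enter the catalog but only write to a single schema
GRANT USE CATALOG ON CATALOG prod_finance_pii TO `finance-engineers`;
GRANT USE SCHEMA, SELECT, MODIFY ON SCHEMA prod_finance_pii.payments TO `finance-engineers`;

-- Review the resulting grants on the catalog
SHOW GRANTS ON CATALOG prod_finance_pii;
```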

Phase 3 – Controlled Migration (Days 11–25)

We migrate namespaces in strict environment order: dev → staging → prod. Each migration phase is executed with zero-downtime cutover using dual-write Delta patterns and table alias transitions for downstream consumers. Pipeline compatibility is validated against your existing dbt models, Spark jobs, and SQL Warehouse queries before cutover.
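One simple technique for keeping downstream code working during cutover (not necessarily the alias pattern described above) is to set the session's default catalog, so existing two-level schema.table references resolve inside Unity Catalog instead of hive_metastore. A sketch with a hypothetical catalog prod_finance_internal and table sales.orders:

```sql
-- Make the Unity Catalog catalog the default for this session
USE CATALOG prod_finance_internal;   -- hypothetical catalog name
SELECT current_catalog();            -- confirms which catalog is now active

-- A two-level name now resolves to prod_finance_internal.sales.orders,
-- not hive_metastore.sales.orders
SELECT count(*) FROM sales.orders;
```

This also speaks to the namespace-collision failure mode: queries that never name a catalog are exactly the ones whose meaning changes at cutover, so pinning the default catalog explicitly makes that switch deliberate rather than accidental.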

Phase 4 – Policy Enforcement & Hardening (Days 26–30)

We implement row-level security, column masking policies, and automated audit workflows. We deliver runbooks for your team and a Terraform module for ongoing catalog provisioning – so governance doesn’t degrade the moment the engagement ends.

💡 Ready to fix this? Your permissions chaos has a remediation path – and it starts with a two-hour architecture review. Schedule your Unity Catalog implementation assessment with Dateonic →

Real Case Study: Prometheus – 14PB of Governed Data, 5x Compute Cost Reduction

Prometheus, a global fintech platform democratizing access to financial services for underserved markets, came to Dateonic with a governance crisis that had become a business-critical blocker.

Over 10 petabytes of data was scattered across isolated business units and legacy systems, with cross-team data transfers taking days to complete and compliance with international financial regulations nearly impossible to demonstrate. There was no unified access model – just fragmented permissions and no lineage visibility across products.

Dateonic implemented Databricks’ Data Intelligence Platform with Unity Catalog as the governance backbone, consolidating data scientists, engineers, and business analysts onto a single platform with unified, role-based access control across all data assets.

The result: 14PB of governed data now accessible through secure, auditable permissions. Compute costs dropped by 5x while data volume doubled. Cross-cloud data transfer expenses fell 25% through optimized architecture. And fraud detection workflows that previously took days now complete in minutes – directly enabled by graph-based analytics running on a properly governed, Photon-optimized query path. Cross-team collaboration increased 3x, unblocking new financial product development that was previously gated behind manual data access requests.

→ Read the full Prometheus case study

The Business Case Is Not Complicated

Uncontrolled data access in Databricks is not a theoretical risk. It is audit exposure, it is regulatory liability under GDPR and CCPA, and it is a silent DBU tax paid every time a misconfigured query performs a full table scan where a filtered view should have been applied.

A production Unity Catalog implementation eliminates all three vectors: it gives you complete audit trails for every data access event, least-privilege enforcement at the column and row level, and Photon-compatible query plans that execute governance without compute overhead.

The longer the migration is deferred, the deeper the technical debt compounds.