Migrating to a modern data platform is the easy part; operating it like a tech giant is where most enterprises stumble. You don’t adopt Databricks just to run legacy pipelines slightly faster in the cloud; you adopt it to build an autonomous, governed, and highly scalable data ecosystem.

But bridging the gap between installation and innovation requires more than just a license.

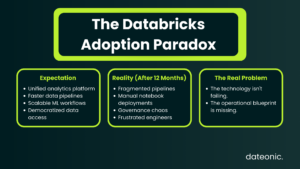

The Adoption Paradox

Leadership signs off on a Databricks enterprise contract expecting an immediate revolution in data velocity. The vision is clear: unified analytics, seamless machine learning pipelines, and democratization of data. Yet, a year later, many organizations find themselves facing the “Adoption Paradox.” The platform feels like a complex, fragmented silo rather than a unified engine for growth.

The bottleneck is rarely the technology itself. Instead, the friction comes from a lack of a cohesive operational blueprint. Adopting a comprehensive platform requires a unified, strategic mindset. Without end-to-end Databricks enablement, data teams end up fighting the platform’s architecture instead of leveraging its untapped potential, leading to delayed time-to-market and frustrated engineers.

True enablement isn’t about handing over a link to the official documentation. It is about architecting for scale from day one and equipping your core engineering team to master the ecosystem’s advanced capabilities.

The Problem: Why “Ad-Hoc” Adoption Fails

When companies treat Databricks implementation as a purely technical migration rather than a paradigm shift, they inadvertently sabotage their own investments. This usually manifests in three critical failure points:

1. The Ecosystem Gap: Treating Databricks Like Legacy Spark

Many organizations simply lift and shift their old Apache Spark jobs into Databricks workspaces. They pay for a premium enterprise data platform but treat it as a basic, ephemeral compute cluster. By doing so, they completely bypass native orchestration, Delta Live Tables (DLT), serverless compute, and MLflow.

2. The “Hire a Unicorn” Fallacy

True Databricks experts are exceptionally rare. Companies frequently stall their platform maturity by spending six to eight months trying to hire a “Principal Databricks Architect” who magically understands every nuance of data engineering, MLOps, and cloud infrastructure. By the time this elusive expert is hired and onboarded, the business has already lost the better part of a year of competitive advantage.

3. Governance as an Afterthought

Setting up isolated workspaces with fragmented, workspace-local metastores and messy IAM roles is a recipe for disaster. When security audits hit, or cross-team collaboration becomes necessary, retrofitting governance over a tangled web of ad-hoc permissions becomes an expensive, high-risk nightmare.

The Dateonic Way: Architecting the “Right Way” from Day One

To break free from these anti-patterns, Dateonic’s platform consulting steps in to establish architectural best practices. We transition teams from reactive patchwork to proactive engineering.

| Feature | The “Ad-Hoc” Way | The Dateonic Way |
|---|---|---|
| Governance | Workspace-local metastores, messy IAM roles | Unity Catalog as the bedrock, centralized access control |
| Deployments | Manual notebook runs, UI-based job creation | Strict CI/CD pipelines, Infrastructure as Code |
| Data Processing | Basic Spark clusters, disjointed scripts | Delta Live Tables (DLT), automated pipeline orchestration |
| Expertise | Waiting months to hire external unicorns | Upskilling internal teams who already know the business logic |

Unity Catalog as the Bedrock

Governance cannot be an add-on. Proper end-to-end Databricks enablement requires treating data governance as a first-class citizen. We implement Unity Catalog to establish centralized, cross-workspace governance.

This enables granular row- and table-level privileges, isolated team environments, and a single source of truth that satisfies stringent security audits while empowering data scientists to explore safely. Read more about our approach in our Unity Catalog Governance Guide.
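To make the privilege model concrete, here is a minimal sketch that renders Unity Catalog `GRANT` statements for one group's read access to one table. The catalog, schema, table, and group names (`main`, `sales`, `orders`, `analysts`) and the helper `render_grants` are hypothetical and chosen for illustration; only the `GRANT ... ON ... TO` statement shape comes from Databricks SQL.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableAccess:
    """Read access for one group on one table (all names are illustrative)."""
    catalog: str
    schema: str
    table: str
    group: str


def render_grants(access: TableAccess) -> list[str]:
    """Render the three grants a group needs to read a single table.

    In Unity Catalog, SELECT on a table is not enough on its own: the
    principal also needs USE CATALOG and USE SCHEMA on the parent objects.
    """
    principal = f"`{access.group}`"
    schema_name = f"{access.catalog}.{access.schema}"
    table_name = f"{schema_name}.{access.table}"
    return [
        f"GRANT USE CATALOG ON CATALOG {access.catalog} TO {principal}",
        f"GRANT USE SCHEMA ON SCHEMA {schema_name} TO {principal}",
        f"GRANT SELECT ON TABLE {table_name} TO {principal}",
    ]


if __name__ == "__main__":
    # Hypothetical example: analysts may read main.sales.orders.
    for statement in render_grants(TableAccess("main", "sales", "orders", "analysts")):
        print(statement)
```

Keeping grants in a reviewed, version-controlled policy like this, rather than clicking through each workspace, is one way to keep access auditable; the generated statements would still need to be run by a principal with the right to grant them.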

Standardized Developer Workflows (CI/CD Done Right)

An advanced data platform is only as fast as its deployment pipeline. If your deployment requires an engineer to manually upload a notebook and click “Run,” your architecture is already a legacy system.

We enforce strict CI/CD pipelines, transitioning teams to treat data infrastructure as code using Databricks Asset Bundles (DABs). This ensures highly reproducible, version-controlled, and tested deployments across dev, staging, and production environments.
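A minimal sketch of the promotion flow such a pipeline might run, assuming the bundle defines targets named `dev`, `staging`, and `prod`. The target names and the helper `bundle_commands` are assumptions for illustration, not a prescribed layout. The code only builds the Databricks CLI command lines; a CI runner would execute them in order.

```python
TARGETS = ("dev", "staging", "prod")  # assumed target names from databricks.yml


def bundle_commands(target: str) -> list[list[str]]:
    """Return the CLI invocations to validate, then deploy, one bundle target."""
    if target not in TARGETS:
        raise ValueError(f"unknown target: {target!r}")
    return [
        ["databricks", "bundle", "validate", "-t", target],
        ["databricks", "bundle", "deploy", "-t", target],
    ]


if __name__ == "__main__":
    for cmd in bundle_commands("staging"):
        # A real pipeline would pass each list to subprocess.run(cmd, check=True).
        print(" ".join(cmd))
```

Validating before deploying means a malformed bundle fails the build before anything in the workspace changes, and rejecting unknown targets stops a typo from deploying to the wrong environment.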

Show, Don’t Tell: Enabling CI/CD with Asset Bundles

We believe in showing, not just telling. Check out our public GitHub repository (Dateonic/Databricks-Asset-Bundles-tutorial) for production-grade examples of what we teach.

To see these principles in action, watch our technical breakdown on transitioning away from manual deployments:

Databricks Asset Bundles – Hands-On Tutorial. Part 1 – running SQL and Python files as notebooks.

Caption: Stop Deploying Manually: Mastering Databricks Asset Bundles for Production CI/CD.

The Training Solution: Building Expertise from Within

The talent reality is stark: hunting for external Databricks unicorns is slow and expensive. The smartest, most effective move is to empower the engineers you already have. Your internal team already understands your unique business logic and domain nuances; Dateonic provides the catalyst to turn them into platform experts.

Our End-to-End Databricks Enablement Training engagement is designed to fix architectural gaps and elevate your team’s capability simultaneously:

- Phase 1: Platform Architecture Review: We align your current infrastructure with the “Right Way” by auditing your networking and security and by establishing a Unity Catalog migration strategy.

- Phase 2: Hands-On Upskilling: We conduct intensive, highly customized workshops focusing on advanced Delta Lake optimization, streaming architectures, and full-lifecycle CI/CD implementation.

- Phase 3: Co-Delivery & Enablement: We don’t just teach and leave. We work alongside your team to build the first production-ready pipelines using DABs and DLT, ensuring total knowledge transfer and establishing internal champions.

Stop Searching for Unicorns. Start Building Them.

End-to-end Databricks enablement is the bridge between purchasing a world-class platform and actually driving measurable business value with it. It’s time to move past basic Spark clusters and manual deployments.

Let’s architect your platform for scale and turn your existing data engineers into absolute Databricks experts.

Ready to stop fighting your infrastructure? Book a Platform Architecture Review with our senior engineers today.