The silent killer of enterprise data platforms isn’t a lack of talent; it’s a lack of standardization.

Your team consists of smart engineers. But if one engineer deploys via “Click-Ops” in the UI, another scripts in notebooks, and a third attempts a custom CI/CD pipeline, you don’t have a platform. You have technical debt compounding daily.

This leads to fragile pipelines, security gaps from misconfigured Unity Catalog permissions, and deployments that break production on Friday afternoons.

Enterprise Databricks Training is not about teaching your team how to write Python or SQL – they likely already know that. It is about instilling a rigorous software engineering discipline specific to the Data Lakehouse. It is the bridge between “it works on my machine” and “it scales in production.”

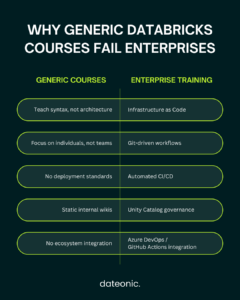

Why “DIY” and Generic Courses Fail the Enterprise

Many organizations attempt to solve the skills gap with generic Udemy subscriptions or internal wikis. Here is why those approaches fail at the architectural level:

- Individual Syntax vs Team Architecture: Generic courses target individuals. They teach the syntax of PySpark, but they ignore the architecture of a secure deployment. They don’t teach how to manage state, handle secrets, or structure a repository for a team of ten.

- The “Wiki” Trap: Internal wikis are static. A “How to Deploy” guide starts going stale the moment it is written, because nothing forces it to change when the deployment process does. New hires then fall back on tribal knowledge, which leads to Shadow IT where every engineer builds their own version of the truth.

- The Context Gap: A generic course won’t teach you how to integrate Databricks with your specific ecosystem (e.g., Azure DevOps, GitHub Actions, or AWS CodePipeline).

To build a resilient platform, you need training that treats your data pipelines like mission-critical software.

What a Modern Enterprise Curriculum Must Cover

A strategic training program must move your team away from manual UI configuration and toward a code-first, automated mindset. At Dateonic, our curriculum is built around the modern Databricks stack.

1. Infrastructure as Code (IaC) & Terraform

Manual configuration is a disaster recovery nightmare. Modern training must cover provisioning workspaces, clusters, and Unity Catalog permissions using Terraform.

- The Goal: If a workspace is deleted today, your team should be able to re-provision it by tomorrow morning with a single command.

- The Skill: Moving from “clicking buttons” to defining infrastructure in .tf files.

2. The Shift to Databricks Asset Bundles (DABs)

The era of the legacy dbx tool and of deploying through the Repos API alone is fading. The standard going forward is Databricks Asset Bundles (DABs).

- We focus heavily on the CLI-driven workflow that architects demand.

- We teach how to define jobs, pipelines, and ML experiments in YAML, ensuring they are version-controlled alongside your code.
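
To illustrate what version-controlled configuration makes possible, the sketch below models a bundle as a plain Python dict that mirrors the top-level `bundle`, `targets`, and `resources` keys of a `databricks.yml` file, then runs a team-convention check on it. The bundle name, job name, host URLs, and the `check_bundle` helper are all hypothetical; nothing here is part of the Databricks CLI.

```python
# Hypothetical bundle config written as a dict that mirrors the shape of a
# databricks.yml file (bundle / targets / resources). All values are made up.
BUNDLE = {
    "bundle": {"name": "sales_pipeline"},
    "targets": {
        "dev": {
            "mode": "development",
            "workspace": {"host": "https://dev.example.cloud.databricks.com"},
        },
        "prod": {
            "mode": "production",
            "workspace": {"host": "https://prod.example.cloud.databricks.com"},
        },
    },
    "resources": {
        "jobs": {
            "nightly_load": {
                "name": "nightly_load",
                "tasks": [{"task_key": "ingest"}],
            }
        }
    },
}


def check_bundle(config: dict) -> list:
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    if not config.get("bundle", {}).get("name"):
        problems.append("bundle.name is missing")
    targets = config.get("targets", {})
    if not targets:
        problems.append("no targets defined")
    # Every target must say which workspace it deploys to.
    for name, target in targets.items():
        if not target.get("workspace", {}).get("host"):
            problems.append(f"target '{name}' has no workspace.host")
    # Assumed team convention: a 'prod' target exists and uses production mode.
    if targets.get("prod", {}).get("mode") != "production":
        problems.append("target 'prod' is missing or not mode: production")
    # A job without tasks is almost certainly an incomplete definition.
    for key, job in config.get("resources", {}).get("jobs", {}).items():
        if not job.get("tasks"):
            problems.append(f"job '{key}' defines no tasks")
    return problems


print(check_bundle(BUNDLE))
```

In a real project the CLI command `databricks bundle validate` checks the actual file; a script like this would only layer team-specific conventions on top of that.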

3. True CI/CD for Data Pipelines

It is not enough to just write code; you must ship it safely. Enterprise training must implement a robust CI/CD lifecycle using tools like Azure DevOps or GitHub Actions.

- Unit Testing: Testing logic before it hits the cloud.

- Integration Testing: Automated validation in a staging environment.

- Promotion: Gated releases from Dev → Staging → Prod.
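
The unit-testing step above can be sketched as follows: keep business logic in plain functions that need no cluster, then assert on them in CI before anything is deployed. The `normalize_order` function and its field names are hypothetical, and plain `assert` statements are used so the snippet needs no test framework.

```python
from datetime import date


def normalize_order(raw: dict) -> dict:
    """Clean one raw order record (hypothetical schema, for illustration)."""
    amount = round(float(raw["amount"]), 2)
    if amount < 0:
        raise ValueError("amount must be non-negative")
    return {
        "order_id": str(raw["order_id"]).strip(),
        "amount": amount,
        "order_date": date.fromisoformat(raw["order_date"]),
        "country": raw.get("country", "unknown").strip().upper(),
    }


def test_normalize_order() -> None:
    """Runs locally or in CI; no workspace or cluster is involved."""
    result = normalize_order(
        {"order_id": " 42 ", "amount": "19.999", "order_date": "2024-01-31", "country": "pl"}
    )
    assert result["order_id"] == "42"
    assert result["amount"] == 20.0
    assert result["order_date"] == date(2024, 1, 31)
    assert result["country"] == "PL"

    # Invalid input must fail loudly instead of flowing into production tables.
    try:
        normalize_order({"order_id": 1, "amount": "-5", "order_date": "2024-01-31"})
    except ValueError:
        pass
    else:
        raise AssertionError("negative amount should be rejected")


test_normalize_order()
```

Because the function takes and returns ordinary dicts, the same logic can be wrapped for use in a Spark job while the test itself stays fast enough to run on every commit.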

| Module | Why It Matters | Practical Outcome |
|---|---|---|
| Terraform & IaC | Eliminates manual setup | Rebuild environments on demand |
| Databricks Asset Bundles | Standardizes deployment | YAML-defined jobs & pipelines |
| Git Version Control | Enables collaboration | Clean repo structure |
| CI/CD Automation | Prevents broken releases | Dev → Staging → Prod gates |
| Testing Strategy | Reduces risk | Unit + integration validation |
| Secrets & State Management | Prevents security gaps | Secure production workflows |

See It in Action: The Dateonic Standard

We believe in “Show, Don’t Tell.” We don’t just lecture on theory; we provide your team with the actual starter kits and code patterns they need to start building immediately.

The Dateonic Repository

We don’t just talk about code quality; we publish it. Check out our public GitHub repository, Dateonic/Databricks-Asset-Bundles-tutorial. This repo demonstrates the production-grade templates and folder structures we teach your team to master.

Watch the Workflow

See how a trained engineer moves from a local IDE to a deployed production job in minutes using DABs:

https://www.youtube.com/@dateonic

Watch our experts explain the Databricks Asset Bundles workflow in depth.

The ROI of Specialized Databricks Consulting & Training

Investing in specialized training is an infrastructure investment. The ROI is measurable in three key areas:

- Speed to Market: When new hires don’t have to reinvent the deployment wheel, they push code weeks faster. A standardized “Golden Path” for development removes friction.

- Governance & Security: Implementing Unity Catalog correctly the first time prevents a costly refactoring project six months down the line. Security becomes code, not a checklist.

- Retention: Top-tier data engineers want to work with modern tools. Providing high-quality, architectural training is a retention tool that signals you are serious about engineering excellence.

Conclusion: Stop Building Tech Debt

If your team is still deploying manually or struggling with inconsistent environments, you are paying a tax on every single release.

Enterprise Databricks Training standardizes your workflow, secures your platform, and turns your data team into a software engineering unit.

Ready to standardize your Data Platform?

Don’t waste months on trial and error. Give your team the templates, tools, and training they need to build right the first time.

Discussion includes: Current bottlenecks, Terraform implementation, and a custom syllabus walk-through.