Databricks is a proven, unified data intelligence platform – but the path from adoption to full value is rarely straightforward. Many organizations struggle to realize expected ROI not because the platform falls short, but because of avoidable Databricks implementation challenges that surface early and compound over time.

In this article, I walk through the six most common Databricks implementation issues that teams encounter – and what experienced implementation partners do differently to prevent them from derailing a project.

Challenge 1 – Skipping the Data Foundation

One of the most frequent mistakes organizations make is jumping straight into machine learning and AI workloads before the descriptive data layers beneath them are mature and reliable. The appeal is understandable – ML/AI is where the excitement lives – but the consequences of rushing this stage are significant.

Symptoms of a premature leap to advanced analytics include:

- Basic business metrics (revenue, units sold, user counts) returning inconsistent results

- Predictive models trained on unreliable source data producing unreliable outputs

- Teams spending engineering time firefighting data quality issues instead of building new capabilities

- Delayed time-to-value and costly rework cycles

If fundamental metrics like sales figures aren’t accurate and consistent, any machine learning model built on top of them will inherit and amplify those errors. The foundation has to be solid first.

How Dateonic approaches this: Dateonic’s certified data engineers establish a solid lakehouse architecture before advanced workloads are introduced. The layered Bronze / Silver / Gold approach ensures that descriptive analytics are reliable before any predictive or prescriptive layer is built on top.
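To make the layering concrete, here is a minimal sketch in plain Python rather than Spark, so it runs anywhere. It is not Dateonic's actual pipeline: the sample orders, the `to_silver` and `to_gold` helpers, and the column names are all hypothetical. It shows raw Bronze rows being deduplicated and typed into Silver, aggregated into a Gold revenue metric, and then reconciled so that the metric can be trusted before any model consumes it.

```python
from collections import defaultdict
from decimal import Decimal, InvalidOperation

# Hypothetical raw order events as they might land in a Bronze layer:
# untyped strings, with a duplicate and a malformed row included on purpose.
BRONZE_ORDERS = [
    {"order_id": "1001", "region": "EU", "amount": "120.50"},
    {"order_id": "1001", "region": "EU", "amount": "120.50"},  # duplicate delivery
    {"order_id": "1002", "region": "US", "amount": "80.00"},
    {"order_id": "1003", "region": "EU", "amount": "n/a"},     # malformed amount
    {"order_id": "1004", "region": "US", "amount": "19.50"},
]


def to_silver(bronze_rows):
    """Deduplicate on order_id and cast amounts; return (clean, rejected)."""
    seen, clean, rejected = set(), [], []
    for row in bronze_rows:
        if row["order_id"] in seen:
            continue  # keep only the first accepted occurrence of each order
        try:
            amount = Decimal(row["amount"])
        except InvalidOperation:
            rejected.append(row)  # quarantine instead of silently dropping
            continue
        seen.add(row["order_id"])
        clean.append({**row, "amount": amount})
    return clean, rejected


def to_gold(silver_rows):
    """Aggregate revenue per region, the kind of metric a Gold table serves."""
    revenue = defaultdict(Decimal)
    for row in silver_rows:
        revenue[row["region"]] += row["amount"]
    return dict(revenue)


silver, rejected = to_silver(BRONZE_ORDERS)
gold = to_gold(silver)

# Reconciliation: the Gold total must equal the sum of accepted Silver rows.
assert sum(gold.values()) == sum(row["amount"] for row in silver)
print(gold, "rejected rows:", len(rejected))
```

The design point is that rejected rows are counted and kept, so a drop in revenue can be traced to bad input instead of surfacing later as an unexplained model error.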

Challenge 2 – Uncontrolled Data Flow & Poor Governance

Without a clearly defined path from source to target, data lineage becomes ambiguous and environments quickly become ungoverned. This is one of the most insidious Databricks implementation challenges because it often goes undetected until a compliance audit or a data incident forces the issue to the surface.

Common governance failure modes include:

- Data lineage that is partial or entirely absent, making debugging and auditing extremely difficult

- Unity Catalog implemented too late – or skipped entirely in self-managed migrations

- Row-level access controls and deletion capabilities not configured correctly

- GDPR and CCPA compliance risks stemming from the inability to honor data subject deletion requests

Unity Catalog is Databricks’ native solution for unified data governance across clouds and workspaces. When it isn’t set up correctly from the beginning, retrofitting it into an existing environment is far more disruptive than building it in from day one.
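Why lineage matters for deletion requests can be shown with a small sketch. It assumes lineage is available as a simple mapping from each table to the tables derived from it. The table names below are invented and only follow the `catalog.schema.table` naming pattern, and `downstream_tables` is a hypothetical helper, not a Unity Catalog API. Given the table where a data subject's record first lands, it lists every derived table that would also need the deletion applied.

```python
from collections import deque

# Hypothetical lineage edges: source table -> tables derived from it.
LINEAGE = {
    "main.bronze.crm_customers": ["main.silver.customers"],
    "main.silver.customers": ["main.gold.customer_360", "main.gold.churn_features"],
    "main.bronze.web_events": ["main.silver.sessions"],
    "main.silver.sessions": ["main.gold.customer_360"],
}


def downstream_tables(lineage, start):
    """Return every table reachable from `start`, in breadth-first order."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        table = queue.popleft()
        for child in lineage.get(table, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


affected = downstream_tables(LINEAGE, "main.bronze.crm_customers")
print(affected)
```

When lineage is partial, this traversal stops early and the missing tables keep the personal data, which is exactly the compliance gap described above.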

How Dateonic approaches this: Unity Catalog implementation is a standard part of every Dateonic delivery – not an optional add-on. The team audits governance gaps during the initial assessment phase, long before any data begins flowing through production pipelines.

Challenge 3 – Migration Complexity from Legacy Systems

Moving from Hadoop, Teradata, or proprietary SQL environments to Databricks is far more complex than a simple lift-and-shift. Many organizations discover this only after migration work has already begun – at which point correcting course is expensive.

The most common Databricks migration challenges encountered during legacy transitions include:

- Broken or silently failing data pipelines that only surface in production

- Data inconsistencies between source and target environments

- Missed SLAs during and immediately after cutover

- Underestimated schema translation complexity between SQL dialects

- Hidden dependencies in legacy jobs that weren’t documented
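Source-to-target inconsistencies of the kind listed above are usually caught with a reconciliation step during the parallel-run period. The following is a minimal sketch, assuming both systems can export the same table as a list of rows. The `table_fingerprint` and `reconcile` helpers and the sample rows are hypothetical. It compares row counts first and then an order-independent content hash.

```python
import hashlib


def table_fingerprint(rows, key_columns):
    """Return (row_count, digest) for a list of dict rows.

    Rows are sorted by their key columns first, so the digest does not depend
    on the order in which either system returns them.
    """
    ordered = sorted(rows, key=lambda r: tuple(str(r[c]) for c in key_columns))
    digest = hashlib.sha256()
    for row in ordered:
        # Serialize columns in name order so dict ordering cannot change the hash.
        line = "|".join(f"{col}={row[col]}" for col in sorted(row))
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return len(ordered), digest.hexdigest()


def reconcile(source_rows, target_rows, key_columns):
    """Compare two extracts and describe the first mismatch found, if any."""
    src_count, src_hash = table_fingerprint(source_rows, key_columns)
    tgt_count, tgt_hash = table_fingerprint(target_rows, key_columns)
    if src_count != tgt_count:
        return f"row count mismatch: source={src_count}, target={tgt_count}"
    if src_hash != tgt_hash:
        return "content mismatch: same row count, different values"
    return "match"


legacy = [{"id": 1, "amount": "10.00"}, {"id": 2, "amount": "5.50"}]
migrated_ok = [{"id": 2, "amount": "5.50"}, {"id": 1, "amount": "10.00"}]
migrated_bad = [{"id": 1, "amount": "10.00"}, {"id": 2, "amount": "5.5"}]

print(reconcile(legacy, migrated_ok, ["id"]))
print(reconcile(legacy, migrated_bad, ["id"]))
```

The second comparison fails because `5.50` became `5.5`, the sort of formatting or casting difference that dialect translation can introduce without any job reporting an error.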

The financial reality is equally sobering. Without expert guidance, organizations frequently find that the true total cost of a migration runs significantly higher than the original infrastructure budget – particularly when rework, extended parallel-run periods, and unplanned downtime are factored in.

How Dateonic approaches this: Dateonic brings a proven migration methodology to engagements across logistics, retail, energy, and financial services. Delta Live Tables and Photon are used as standard architecture components to ensure pipeline reliability and performance from the outset. See the Snowflake to Databricks migration case study and Dateonic’s Data Warehouse & Cloud Migration service for more detail.

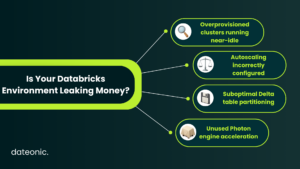

Challenge 4 – Cost Overruns from Cluster Mismanagement

Databricks cost management is one of the areas where DIY implementations most consistently underperform. Overprovisioned clusters and inefficient query patterns quietly inflate Databricks Unit (DBU) consumption – and because the waste is gradual, it often goes unnoticed for months.

The most frequent culprits behind runaway DBU consumption include:

- Clusters sized for peak load running near-idle during off-peak hours

- Autoscaling configured incorrectly or not enabled at all

- Data skew causing certain tasks to run far longer than others

- Suboptimal partitioning strategies on large Delta tables

- Photon engine not enabled on jobs that would benefit from it most
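Several of these culprits are visible in the cluster definition itself, so they can be checked before a cluster ever starts. Below is a small sketch of such a check. The field names (`autoscale`, `autotermination_minutes`, `runtime_engine`) mirror the Databricks Clusters API, while the cluster name, runtime version, node type, and the `lint_cluster_spec` helper are placeholders made up for illustration.

```python
# Hypothetical all-purpose cluster spec. Field names follow the Databricks
# Clusters API; the values are placeholders, not recommendations.
CLUSTER_SPEC = {
    "cluster_name": "etl-shared",
    "spark_version": "15.4.x-scala2.12",
    "node_type_id": "i3.xlarge",
    "autoscale": {"min_workers": 2, "max_workers": 8},
    "autotermination_minutes": 30,
    "runtime_engine": "PHOTON",
}


def lint_cluster_spec(spec):
    """Return a list of cost-related warnings for a cluster spec dict."""
    warnings = []
    autoscale = spec.get("autoscale")
    if autoscale is None:
        warnings.append("fixed-size cluster: no autoscale range configured")
    elif autoscale["min_workers"] >= autoscale["max_workers"]:
        warnings.append("autoscale range is empty: min_workers >= max_workers")
    # A missing or zero value means the cluster never shuts itself down.
    if not spec.get("autotermination_minutes"):
        warnings.append("no auto-termination: an idle cluster keeps accruing DBUs")
    if spec.get("runtime_engine") != "PHOTON":
        warnings.append("Photon not enabled: confirm whether the workload would benefit")
    return warnings


peak_sized = {"cluster_name": "adhoc", "num_workers": 16, "autotermination_minutes": 0}

print(lint_cluster_spec(CLUSTER_SPEC))
for warning in lint_cluster_spec(peak_sized):
    print("-", warning)
```

A check like this could run in CI against cluster and job definitions. It does not detect data skew or poor partitioning, which only show up in query metrics.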

These inefficiencies compound over time. A workload that could complete in 20 minutes with proper configuration may routinely take over an hour – and the difference in DBU cost is substantial at scale.
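The arithmetic behind that claim is simple, because DBU consumption scales with runtime on a given cluster. The sketch below uses made-up inputs: a cluster that consumes 20 DBUs per hour, a price of $0.30 per DBU, and one run per day. Real rates depend on cloud, tier, and workload type, and the underlying cloud VM cost is ignored here.

```python
def run_cost(dbu_per_hour, minutes, price_per_dbu):
    """Cost of one run: DBUs consumed multiplied by the price per DBU."""
    return dbu_per_hour * (minutes / 60) * price_per_dbu


# Hypothetical inputs, chosen only to illustrate the calculation.
DBU_PER_HOUR = 20.0
PRICE_PER_DBU = 0.30
RUNS_PER_YEAR = 365

tuned = run_cost(DBU_PER_HOUR, 20, PRICE_PER_DBU)    # the 20-minute run
untuned = run_cost(DBU_PER_HOUR, 60, PRICE_PER_DBU)  # the same job at one hour
annual_difference = (untuned - tuned) * RUNS_PER_YEAR

print(f"tuned run:         ${tuned:.2f}")
print(f"untuned run:       ${untuned:.2f}")
print(f"annual difference: ${annual_difference:.2f}")
```

With the same cluster, the one-hour run costs three times as much as the 20-minute run, and that ratio holds whatever the actual DBU rate is. Multiplied across dozens of daily jobs, the gap becomes a visible budget line.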

How Dateonic approaches this: Cluster optimization and cost governance are built into Dateonic engagements from day one, not added as an afterthought. For a deeper look at the technical approach, see the guide on optimizing clusters in Databricks and the architect’s guide to reducing DBU consumption.

Challenge 5 – Orchestration & Pipeline Sprawl

As data sources and use cases multiply, so does pipeline complexity. Without a deliberate orchestration strategy, the number of jobs grows faster than the ability to monitor and maintain them – and when something breaks, it may do so silently.

Signs that orchestration has become a problem:

- Jobs failing without alerting downstream consumers or on-call engineers

- No clear ownership over who is responsible for individual pipelines

- A patchwork of native Databricks Workflows and external tools that don’t coordinate cleanly

- Dependency chains between jobs that are undocumented or poorly understood

Teams often treat orchestration as a detail to be resolved later. By the time pipeline sprawl becomes obvious, refactoring it is a significant engineering undertaking.
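An early inventory of jobs, owners, and dependencies is cheap to build and makes the signs above measurable. Here is a minimal sketch using Python's standard `graphlib` module. The job names, owners, and the `audit_jobs` helper are hypothetical, and the catalog is a plain dictionary, not an export from Databricks Workflows or any specific orchestrator.

```python
from graphlib import TopologicalSorter

# Hypothetical job catalog: each job lists its owner and upstream dependencies.
JOBS = {
    "ingest_orders": {"owner": "data-platform", "depends_on": []},
    "ingest_customers": {"owner": "data-platform", "depends_on": []},
    "build_silver": {
        "owner": "analytics-eng",
        "depends_on": ["ingest_orders", "ingest_customers"],
    },
    "revenue_report": {"owner": None, "depends_on": ["build_silver"]},
}


def audit_jobs(jobs):
    """Return (run_order, unowned, unknown_deps) for a job catalog."""
    unowned = sorted(name for name, job in jobs.items() if not job["owner"])
    unknown_deps = sorted(
        {dep for job in jobs.values() for dep in job["depends_on"] if dep not in jobs}
    )
    # TopologicalSorter expects a mapping of node -> set of predecessors.
    graph = {name: set(job["depends_on"]) for name, job in jobs.items()}
    # static_order() raises graphlib.CycleError if dependencies form a loop.
    run_order = list(TopologicalSorter(graph).static_order())
    return run_order, unowned, unknown_deps


run_order, unowned, unknown_deps = audit_jobs(JOBS)
print("run order:", run_order)
print("jobs without an owner:", unowned)
print("dependencies on jobs not in the catalog:", unknown_deps)
```

Jobs without an owner and dependencies that point outside the catalog are the two findings most worth fixing first, since they are where silent failures tend to go unnoticed.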

How Dateonic approaches this: Orchestration design is addressed upfront as part of the implementation architecture. Whether native Databricks Workflows or an external orchestrator is the right fit depends on the specific environment – and that decision is made deliberately during the design phase, not discovered reactively after deployment.

Challenge 6 – Internal Skill Gaps

Databricks is a sophisticated platform that demands strong foundational knowledge across data engineering, Apache Spark, cloud infrastructure, and, increasingly, MLflow and Unity Catalog. The learning curve is real – and organizations often underestimate it when planning implementations.

Where skill gaps most frequently appear:

- Spark tuning and understanding distributed execution behavior

- Unity Catalog’s permission model and metastore architecture

- MLflow experiment tracking and model registry management

- Delta Lake internals – time travel, OPTIMIZE, VACUUM, and Z-ordering
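The Delta Lake items in particular interact in ways that are easy to get wrong: VACUUM removes old data files, which limits how far back time travel can reach. The sketch below only builds the SQL statements as strings, so it runs without a cluster. The helper names and the table name are hypothetical, and in a notebook the statements would typically be passed to `spark.sql(...)`.

```python
def delta_maintenance_sql(table, zorder_columns=(), retain_hours=168):
    """Build OPTIMIZE and VACUUM statements for one Delta table."""
    if retain_hours < 168:
        # Delta's default safety check rejects retention below 7 days (168 hours).
        raise ValueError("retention below 168 hours can break time travel and readers")
    optimize = f"OPTIMIZE {table}"
    if zorder_columns:
        # Z-ordering co-locates rows with similar values in these columns.
        optimize += f" ZORDER BY ({', '.join(zorder_columns)})"
    return [optimize, f"VACUUM {table} RETAIN {retain_hours} HOURS"]


def time_travel_sql(table, version):
    """Build a query that reads the table as of an earlier version number."""
    return f"SELECT * FROM {table} VERSION AS OF {int(version)}"


# Hypothetical table name, used only for illustration.
for statement in delta_maintenance_sql("main.silver.orders", ["customer_id"]):
    print(statement)
print(time_travel_sql("main.silver.orders", 3))
```

The guard on `retain_hours` reflects the trade-off: a shorter retention window saves storage but shrinks the range of versions that remain readable.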

Hiring certified Databricks engineers on the open market is competitive and time-consuming. Many organizations find themselves in a position where implementation work has begun before the right expertise is in place – meaning early design decisions are made without the full picture.

How Dateonic approaches this: Dateonic operates with a fully certified team of Databricks data engineers and runs the Databricks Community Meetup in Poland – an indicator of sustained, deep platform engagement beyond client work. For organizations weighing how to source expertise, the comparison between working with a Databricks partner vs. a freelancer is worth reading before making a decision.

| Implementation Challenge | The Common Symptom | The Dateonic Approach |
|---|---|---|
| Skipping Data Foundation | Firefighting bad data instead of building AI | Establishing Bronze/Silver/Gold Lakehouse architecture upfront |
| Poor Governance | Unclear lineage, GDPR compliance risks | Unity Catalog implemented from Day 1 |
| Migration Complexity | Broken pipelines during legacy cutover | Using Delta Live Tables & Photon for reliable pipelines |
| Cost Overruns | Unnoticed, runaway DBU consumption | Baking cost governance and cluster optimization into the initial design |
| Pipeline Sprawl | Jobs failing silently with no clear ownership | Deliberate orchestration architecture (Native Workflows vs. External) |
| Internal Skill Gaps | Early design decisions made before the right expertise is in place | Fully certified team of Databricks data engineers |

The Common Thread: These Are Planning Failures, Not Platform Failures

Looking across all six challenges, a clear pattern emerges: none of them are caused by limitations in the Databricks platform itself. Databricks is trusted by thousands of organizations globally, and the platform’s capabilities are well-established across industries from financial services to manufacturing.

What these challenges share is that they are all downstream consequences of how implementation is planned – or not planned. Governance that isn’t considered at the outset becomes a compliance risk. Clusters that aren’t sized correctly from the start become a cost problem. Migrations that aren’t architected carefully become rework.

Expert-guided, phased implementations consistently avoid these pitfalls – not because they use different technology, but because they apply structured decision-making at the points where mistakes are most likely and most costly.

Work With Dateonic

Dateonic is an official Databricks consulting partner with a fully certified team of data engineers. End-to-end services span strategy, migration, Unity Catalog, MLflow, AI/ML, and ETL/ELT optimization – across logistics, retail, energy, and financial services.

Planning a Databricks implementation? Talk to Dateonic’s certified engineers before you start – not after the first problems appear.