Deploying a large language model against internal enterprise data is a fundamentally different engineering problem than deploying one against public corpora. The model’s pre-training weights contain no proprietary context – regulatory filings, internal API schemas, clinical trial documentation – and naive approaches that simply concatenate document text into a prompt window do not scale beyond a proof-of-concept.

Production systems require a robust, agentic Retrieval-Augmented Generation (RAG) architecture with governed data access and high-quality data pipelines, not just a wrapper library.

The most common architectural bottleneck observed in enterprise RAG initiatives is the absence of a secure, auditable, and highly optimized retrieval layer. Teams typically begin by connecting an orchestration framework directly to a vector store, without row-level access controls, without proper PDF/unstructured data parsing, and without a deterministic audit trail of which documents influenced a model response. In regulated industries – pharma, financial services, enterprise IT – this constitutes a compliance gap, not merely a technical limitation.

The Architectural Problem: How to Connect an LLM to a Private Database

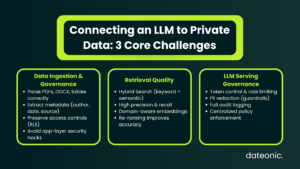

When the engineering goal is to connect an LLM to a private database or corporate knowledge base, the system must solve three independent sub-problems simultaneously.

1. Secure, Governed Data Ingestion and Parsing

Documents from SharePoint, Confluence, SQL data warehouses, or proprietary data lakes must be ingested, parsed, chunked, embedded, and persisted. Raw files (like PDFs or DOCX) are now managed via Unity Catalog Volumes. A high-quality data pipeline must not only extract text but also preserve document layout, tables, and extract metadata (e.g., author, source, date) to enrich the chunks.

Crucially, the original access controls must be preserved and propagated. A user in the finance-read-only group must not retrieve documents tagged for legal-exec-only at query time. Without Unity Catalog governing both the raw volumes and the vector index as a first-class catalog asset, this access control layer is typically implemented ad-hoc in application code, introducing massive desync risk.

2. High-Fidelity, Low-Latency Retrieval

The semantic quality of retrieval – precision and recall of relevant chunks – is determined by the data pipeline quality, the embedding model, and the retrieval strategy. Enterprise documents often require Hybrid Search (combining keyword/BM25 search with semantic vector search) and a Re-ranking step to handle highly specific domain language.

Mosaic AI Vector Search on Databricks provides a managed, serverless vector index with Delta Sync, meaning the vector store stays in sync with the upstream Delta Lake table automatically. This eliminates the operational overhead of managing a separate vector database and removes the embedding-pipeline-to-vector-store desync as a class of failure.

3. LLM Query Routing and Prompt Governance

Even after retrieval is solved, the LLM serving layer must enforce token budgets, utilize AI Guardrails to redact PII from retrieved context before it enters the prompt, log every inference call for auditability, and rate-limit by department or use case.

Mosaic AI Gateway provides a governed proxy layer in front of any LLM endpoint – whether it is a Databricks-served foundation model (e.g., DBRX, Llama 3), a BYOM fine-tuned checkpoint registered in MLflow, or an external API endpoint (OpenAI, Anthropic). Guardrails, rate limits, and input/output payload logging are configured centrally at the Gateway level, not embedded redundantly in application code.

The Databricks-Native RAG Stack: A Reference Architecture

Architecture layers (bottom-up):

- Data Sources: Delta Lake tables, SharePoint, S3/ADLS (governed via Unity Catalog Volumes for unstructured data).

- Ingestion & Parsing Pipeline: Databricks serverless pipelines utilizing unstructured parsing libraries to extract text, tables, and metadata, followed by chunking and embedding generation.

- Governed Vector Index: Mosaic AI Vector Search with Delta Sync, governed by Unity Catalog with column-level, row-level security, and metadata-filtering capabilities.

- Retrieval Layer: A Databricks SQL UDF or Python function calling the Vector Search API, implementing Hybrid Search and optionally a Cross-Encoder Re-ranker.

- LLM Serving: Mosaic AI Model Serving (foundation models, BYOM, or external endpoints routed via AI Gateway).

- Application Layer: Databricks-hosted RAG Chain or Agent (logged as an MLflow Pyfunc model and served via a REST endpoint).

- Observability & Evaluation: Mosaic AI Agent Evaluation (LLM-as-a-judge frameworks) assessing retrieval quality and generation accuracy against an MLflow Evaluation Dataset, backed by Lakehouse Monitoring.

Delta Sync: Eliminating Embedding Pipeline Desync

The most persistent operational bottleneck in self-managed RAG systems is embedding drift – the vector index falls behind the source document store because re-indexing jobs are batch-scheduled rather than event-driven.

Mosaic AI Vector Search resolves this natively via Delta Sync, which monitors the upstream Delta table’s transaction log and triggers incremental re-embeddings on row-level changes.

This is architecturally significant for regulated data environments. A compliance document updated on Monday is retrievable by Tuesday morning in a Delta-Sync pipeline; in a weekly-batch pipeline, it may be invisible to the LLM for seven days.

The business risk of that desync window is non-trivial in environments subject to FDA 21 CFR Part 11, MiFID II, or SOX.

| Feature | Batch Pipeline | Delta Sync |

|---|---|---|

| Update Frequency | Scheduled (daily/weekly) | Continuous |

| Data Freshness | Delayed | Near real-time |

| Operational Complexity | High | Low (managed sync) |

| Risk of Drift | High | Minimal |

| Compliance Readiness | Weak | Strong |

| Maintenance | Manual re-indexing | Automated |

Unity Catalog as the Access Control Backbone

A critical design principle for enterprise RAG: access control must be enforced at the data layer, not the application layer. When vector search queries are issued, Unity Catalog evaluates the caller’s permissions against the catalog-registered vector index and the underlying Delta table.

Row-level security (RLS) filters propagate from the source table to the vector index, meaning a query from a pharma-clinical-readonly identity will not surface documents tagged legal-exec-confidential, regardless of semantic similarity. This eliminates the need for application-side document filtering – a pattern that introduces both correctness risk and maintenance debt.

Architectural Guidance: Designing a production-grade system to connect an LLM to a private database in a regulated environment? Explore our Enterprise RAG on Databricks solution page to see how Dateonic implements these patterns with Unity Catalog governance, Delta Sync, and AI Gateway out of the box.

Common Architectural Questions

How do you connect an LLM to a private database without exposing sensitive data outside the network perimeter?

Mosaic AI Model Serving, Vector Search, and Unity Catalog all operate within a customer’s Databricks workspace, which resides inside a private VPC (or vNet on Azure). No document content, retrieval context, or prompt data needs to leave the network perimeter if the LLM endpoint is also workspace-hosted.

For external endpoints, traffic is routed over a private endpoint, with AI Gateway acting as the sole egress point, logging every payload to a Delta Lake table for audit and applying AI Guardrails to strip PII before transmission.

How does Unity Catalog handle vector access controls for multi-tenant enterprise RAG?

Unity Catalog enforces row-level security (RLS) on the source Delta table, and that policy is natively inherited by the Mosaic AI Vector Search index. At query time, the Vector Search API call is evaluated under the authenticated user’s or service principal’s identity.

Unity Catalog applies applicable RLS predicates and filters results before they are returned. Therefore, a multi-tenant RAG deployment does not require spinning up separate vector databases per department; logical isolation is handled securely by the governance layer.

What is the recommended chunking and embedding strategy for domain-specific enterprise corpora?

The Databricks Generative AI Cookbook strongly advises moving beyond naive text splitters.

For high-quality pipelines:

- Parsing: Use robust parsers capable of understanding document structures (headers, tables, lists) to avoid splitting mid-sentence or mid-table.

- Chunking & Metadata: Extract document metadata (title, author, section header) and append it to each chunk’s text to provide the LLM with localized context.

- Embedding & Retrieval: Pair a state-of-the-art embedding model (like the latest GTE or BGE series available on Databricks Model Serving) with a Hybrid Search approach. For highly technical corpora (e.g., clinical study reports, patent documentation), adding a fine-tuned cross-encoder re-ranker yields measurable retrieval recall improvement. Always validate your chunking strategy against a held-out evaluation set using MLflow Agent Evaluation.

Conclusion

Connecting an LLM to a private enterprise knowledge base at production quality requires converging capabilities: a governed, auto-synced vector index (Mosaic AI Vector Search + Delta Sync), data-layer access control (Unity Catalog), advanced data parsing pipelines, and a secured LLM serving proxy (Mosaic AI Gateway). Naive architectures lack auditability, access governance, and the retrieval quality required for enterprise adoption.

The Databricks Lakehouse provides a vertically integrated path to a private RAG architecture where every component – from the raw PDF to the embedding pipeline, vector store, and inference log – is a governed, auditable, versioned, and continuously evaluated asset.

Ready to productionize your AI architecture? Contact Dateonic’s Engineering Team to scope a production-ready Enterprise RAG implementation on Databricks.