The question of whether to use Retrieval-Augmented Generation (RAG) or fine-tuning surfaces in virtually every Enterprise AI program reaching production readiness. Both approaches address fundamentally different architectural gaps, and conflating them – as many initial LLM pilots do – leads to costly redesigns at scale.

The naive approach – wrapping a base model in a LangChain pipeline and calling it a production RAG system – fails to account for data governance, latency budgets, model drift, and the operational overhead of maintaining knowledge freshness. At enterprise scale, in regulated environments, the architectural decision between RAG and fine-tuning must be treated as a first-class infrastructure decision, not an afterthought.

What RAG and Fine-Tuning Actually Solve

Before comparing them, it is essential to understand that RAG and fine-tuning address orthogonal failure modes. Treating them as interchangeable alternatives is the root cause of most misdirected AI investments.

RAG: Dynamic Knowledge Retrieval with Access-Controlled Context

RAG augments a base LLM’s response by retrieving relevant documents from an external store – typically a vector index – and injecting them into the model’s context window at inference time.

The critical architectural property is knowledge decoupling: the model’s parameters are not modified, and the knowledge corpus can be updated, versioned, or access-controlled entirely independently. This makes RAG the default architecture for use cases involving:

- Frequently changing knowledge (regulatory updates, product documentation, internal policies)

- Access-controlled content requiring row-level or document-level security (e.g., PII-segmented HR data, deal-specific financial records)

- Auditability requirements where source attribution per retrieved chunk is a compliance mandate

- Multi-tenant enterprise deployments where different user groups must see different document scopes

On Databricks, this architecture is implemented natively via Mosaic AI Vector Search, which indexes Delta tables directly. The vector index inherits Unity Catalog governance – meaning the same column-level masking policies, row-level filters, and access grants applied to your structured data extend seamlessly to your embedding store. There is no separate ACL system to maintain.

Databricks’ AI Gateway then provides a centralized inference layer: routing, rate limiting, token logging, and model fallback – all without fragmenting governance across custom middleware.

Fine-Tuning: Behavioral and Stylistic Specialization

Fine-tuning (including PEFT approaches like LoRA/QLoRA) modifies a model’s weights on a domain-specific dataset. It is the correct tool when the failure mode is not missing knowledge, but incorrect model behavior: wrong tone, incorrect output format, failure to follow domain-specific reasoning patterns, or poor performance on highly specialized tasks.

Appropriate fine-tuning use cases in enterprise settings:

- Structured output compliance: forcing consistent JSON schema adherence for a downstream ETL pipeline

- Domain-specific reasoning: medical coding classification (ICD-10/CPT), financial instrument categorization, or contract clause extraction where base models consistently under-perform

- Latency-sensitive inference: a fine-tuned smaller model (e.g., 7B parameters) can match a prompted larger model (e.g., 70B) on a narrow task at a fraction of the serving cost

- Regulatory tone conformance: generating text that structurally mirrors specific legal or compliance standards

On Databricks, Mosaic AI Model Training (formerly MosaicML) provides a managed fine-tuning environment. Training runs on your own compute, data never leaves your VPC, and the resulting model checkpoint is registered in Unity Catalog’s Model Registry with full lineage tracking – connecting training dataset versions, experiment runs in MLflow, and deployed serving endpoints.

The Architectural Decision Matrix

The correct framing is not „RAG versus fine-tuning” but rather „which failure mode am I solving?”

| Criterion | RAG | Fine-Tuning |

|---|---|---|

| Primary problem | Knowledge gap, stale context | Behavioral/output gap |

| Knowledge update frequency | Real-time to daily | Requires retraining cycle |

| Data governance surface | Full Unity Catalog control | Training dataset + model registry |

| Inference cost structure | Higher latency (retrieval + generation) | Lower latency (no retrieval hop) |

| Hallucination risk | Reduced (grounded in retrieved context) | Elevated on out-of-distribution inputs |

| Cold-start effort | Moderate (indexing pipeline) | High (curated training dataset required) |

| Explainability | High (source chunks attributable) | Low (embedded in weights) |

| Regulated industries | Strong alignment | Requires additional audit instrumentation |

Architectural Guidance: Struggling to determine whether your enterprise use case requires RAG, fine-tuning, or a hybrid approach? Explore our Enterprise RAG Architecture Service to see how Dateonic implements production-ready, governed AI systems on Databricks – with Unity Catalog security built in from day one.

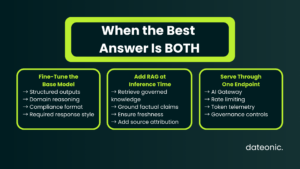

When the Answer is Both: Hybrid Architecture

A common production pattern in regulated industries is fine-tuning for behavior + RAG for knowledge. The canonical implementation:

- A base model is fine-tuned to produce structured outputs, follow domain-specific reasoning chains, or adopt a required response format.

- That fine-tuned model is then augmented with RAG at inference time – retrieving context from a governed vector index to ground factual claims.

This architecture is well-supported in Databricks Model Serving, where a custom Python model wrapper can encapsulate both the retrieval call (via Mosaic AI Vector Search SDK) and the generation call (against the fine-tuned endpoint), exposing a single REST endpoint with AI Gateway rate limiting and token telemetry applied at the boundary.

The pattern is particularly common in Pharma for Medical Information response generation: the fine-tuned model understands the required disclaimer structure and response format; RAG grounds it in current label data indexed from a Delta-backed document store.

RAG Alternatives Worth Architectural Consideration

Before defaulting to RAG, enterprise architects should evaluate several architectural alternatives that may be more appropriate depending on constraints:

- Long-context prompting: Models with 128K–1M token context windows (e.g., Claude, Gemini) may absorb entire knowledge corpora directly for bounded, low-cardinality document sets – eliminating retrieval latency at the cost of higher per-call token spend.

- SQL-backed semantic layers: For structured enterprise data (ERP, CRM, financial records), a Text-to-SQL layer over a governed Databricks SQL Warehouse often outperforms RAG on precision, with full audit trail.

- Knowledge Graph augmentation: For complex multi-hop reasoning (e.g., pharmaceutical drug-interaction chains, financial counterparty graphs), property graph stores with LLM-driven traversal can outperform cosine-similarity retrieval.

- Agent-based tool use: For transactional or multi-step workflows, a structured agent calling governed API tools is more reliable than attempting to encode procedural knowledge into either RAG or a fine-tuned model.

Each alternative carries different latency, governance, and maintenance profiles. The decision must be anchored in the specific failure mode being addressed, not implementation convenience.

Common Architectural Questions

Does RAG completely eliminate the need for fine-tuning?

No. RAG addresses knowledge retrieval gaps but does not modify model behavior. If a base model consistently produces outputs in the wrong format, reasons incorrectly for a domain-specific task, or fails on specialized classification, RAG will not resolve those issues.

Fine-tuning the model’s weights remains the appropriate intervention for behavioral and output-format failures. The two techniques target different failure modes and can operate in combination within the same serving pipeline.

How does Databricks Unity Catalog handle access control for RAG vector indexes?

Mosaic AI Vector Search indexes are created over Delta tables registered in Unity Catalog. Access control is enforced at the catalog/schema/table level using standard GRANT and REVOKE statements – the same permission model as any other Unity Catalog asset.

At query time, the retrieval SDK propagates the caller’s identity; if the backing Delta table has row-level security configured via dynamic views or column-level masking policies, those filters apply to retrieval results automatically. There is no separate ACL layer required for the vector index itself.

When is fine-tuning not cost-justified in an enterprise context?

Fine-tuning is typically not cost-justified when the target task can be solved with well-structured few-shot prompting combined with RAG, when the domain-specific training dataset cannot be curated to sufficient quality and volume (generally 1,000–50,000+ labeled examples depending on task complexity), or when the knowledge to be incorporated changes on a cadence shorter than a feasible retraining cycle.

In those scenarios, a well-architected RAG pipeline on Databricks with a strong base model will deliver better ROI and lower operational overhead.

What are the primary RAG bottlenecks at enterprise scale on Databricks?

At scale, the primary RAG performance constraints are: retrieval latency (mitigated by Mosaic AI Vector Search’s DiskANN-based approximate nearest neighbor index, which serves sub-100ms queries at billion-vector scale); chunk quality (requiring structured chunking pipelines – ideally implemented as Delta Live Tables workflows for lineage tracking); and context window utilization (resolved through reranking with a cross-encoder model before final context injection).

Token budget management at the AI Gateway boundary prevents runaway inference costs across multi-tenant deployments.

Conclusion and Next Steps

The RAG vs. fine-tuning decision is an architectural diagnosis, not a technology preference. RAG is the default architecture for knowledge-intensive, access-controlled, and compliance-sensitive enterprise deployments – and on Databricks, it integrates natively with the governance infrastructure you already operate. Fine-tuning addresses behavioral and output-format failures that retrieval cannot fix, and when combined with RAG in a hybrid architecture, it covers the full range of enterprise LLM production requirements.

The engineering investment should be proportional to the failure mode. Before committing to a fine-tuning cycle, validate that the problem is behavioral rather than informational. Before deploying a RAG pipeline, ensure your retrieval architecture is governed, observable, and latency-validated.

Ready to productionize your AI architecture? Contact Dateonic’s Engineering Team to assess your RAG and fine-tuning strategy, validate your Databricks architecture, and deliver production-ready AI systems that meet enterprise governance requirements.